Researchers are working towards a future where robots can assist people who need help getting dressed.

Data from the National Center for Health Statistics reveals that 92% of nursing facility residents and at-home care patients require assistance with dressing, a daily activity easily taken for granted by many.

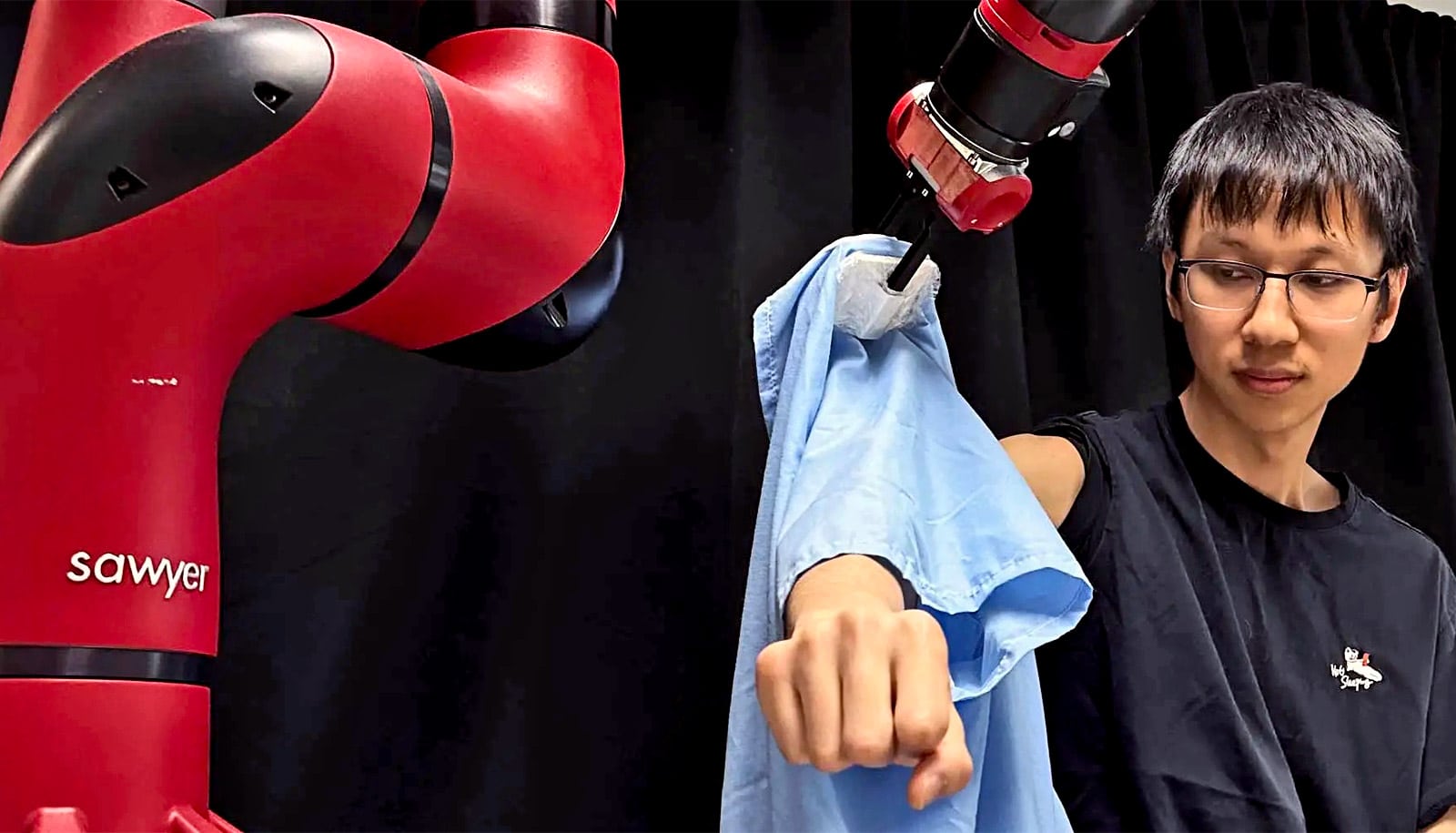

“Remarkably, existing endeavors in robot-assisted dressing have primarily assumed dressing with a limited range of arm poses and with a single fixed garment, like a hospital gown,” says Yufei Wang, a PhD student in Carnegie Mellon University’s Robotics Institute (RI) who is working on a robot-assisted dressing system.

“Developing a general system to address the diverse range of everyday clothing and varying motor function capabilities is our overarching objective. We also want to extend the system to individuals with different levels of constrained arm movement.”

The robot-assisted dressing system uses artificial intelligence to accommodate various human body shapes, arm poses, and clothing selections. The team used reinforcement learning, a technique that rewards a system for accomplishing certain tasks, to build their general dressing system. Specifically, the researchers gave the robot a positive reward each time it properly placed a garment further along a person’s arm. Through continued reinforcement, they increased the success rate of the system’s learned dressing strategy.
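The progress-based reward described above can be illustrated with a minimal sketch. This is not the team's actual reward function: the names `progress_reward` and `step_rewards`, the use of meters, and the `scale` parameter are all assumptions made for illustration.

```python
from typing import List


def progress_reward(prev_progress_m: float, new_progress_m: float,
                    scale: float = 1.0) -> float:
    """Return a positive reward only when the garment advanced along the arm.

    Progress is assumed to be the distance (in meters) the sleeve opening
    has traveled from the hand toward the shoulder. This is a hypothetical
    measure, not one taken from the article.
    """
    advance = new_progress_m - prev_progress_m
    return scale * max(advance, 0.0)


def step_rewards(progress_trace_m: List[float]) -> List[float]:
    """Compute one reward per step from a sequence of progress readings."""
    return [
        progress_reward(prev, new)
        for prev, new in zip(progress_trace_m, progress_trace_m[1:])
    ]


# Hypothetical trace: the sleeve advances, stalls, then advances again.
rewards = step_rewards([0.0, 0.05, 0.05, 0.12])
print(rewards)
```

In this sketch a stalled or retreating step earns zero reward, so the only way to accumulate reward is to keep moving the garment further along the arm.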

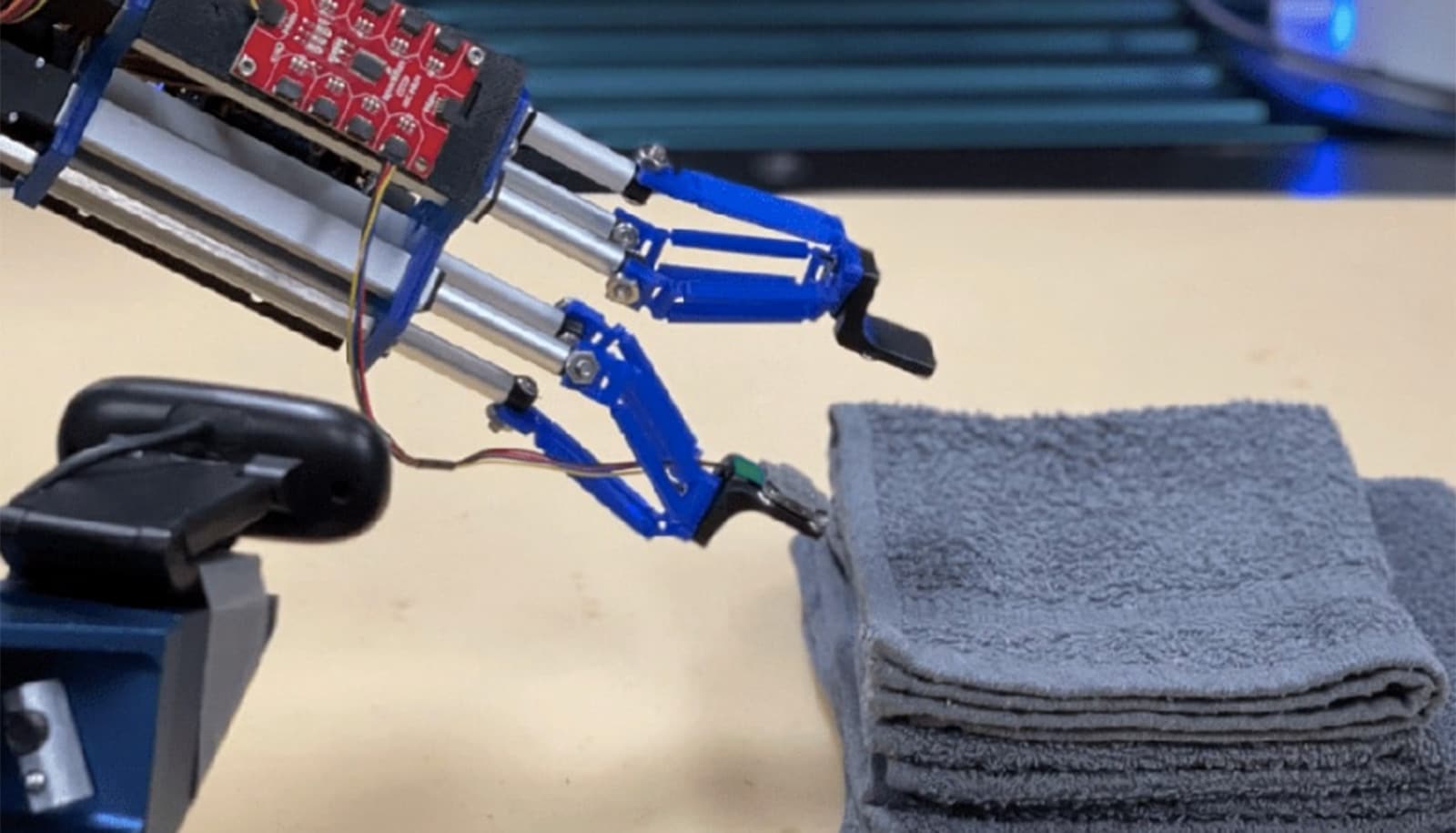

The researchers used a simulation to teach the robot how to manipulate clothing and dress people. The team had to carefully deal with the properties of the clothing material when transferring the strategy learned in simulation to the real world.

“In the simulation phase, we employ deliberately randomized diverse clothing properties to guide the robot’s learned dressing strategy to encompass a broad spectrum of material attributes,” says Zhanyi Sun, a master’s student who also worked on the project. “We hope the randomly varied clothing properties in simulation encapsulate the garments’ property in the real world, so the dressing strategy learned in simulation environments can be seamlessly transferred to the real world.”
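As a rough illustration of randomizing clothing properties in simulation, the sketch below samples a fresh set of cloth parameters for each training episode. The property names (`stiffness`, `friction`, `density`) and their ranges are assumptions chosen for the example; the article does not say which properties the team varied or over what ranges.

```python
import random
from dataclasses import dataclass


@dataclass
class ClothProperties:
    """Hypothetical cloth parameters a simulator might expose."""
    stiffness: float
    friction: float
    density: float


# Assumed sampling ranges, for illustration only.
PROPERTY_RANGES = {
    "stiffness": (0.1, 1.0),
    "friction": (0.2, 0.9),
    "density": (0.5, 2.0),
}


def sample_cloth_properties(rng: random.Random) -> ClothProperties:
    """Draw each property uniformly from its assumed range."""
    values = {
        name: rng.uniform(low, high)
        for name, (low, high) in PROPERTY_RANGES.items()
    }
    return ClothProperties(**values)


# Each simulated episode would get its own randomly drawn garment.
rng = random.Random(0)
episode_garments = [sample_cloth_properties(rng) for _ in range(3)]
for garment in episode_garments:
    print(garment)
```

Training across many such draws is what lets a learned strategy cope with a real garment whose exact properties were never simulated, provided they fall within the sampled ranges.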

The researchers evaluated the robotic dressing system in a human study with 510 dressing trials across 17 participants with different body shapes and arm poses, using five garments. For most participants, the system was able to fully pull the sleeve of each garment onto their arm. When averaged over all test cases, the system dressed 86% of the length of the participants’ arms.
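The averaged figure can be read as a per-trial ratio of dressed length to arm length, averaged over trials. The sketch below shows one plausible way to compute such a metric; the function names and the sample measurements are hypothetical and are not data from the study. For reference, 510 trials across 17 participants works out to an average of 30 trials per participant.

```python
from statistics import mean
from typing import Iterable, Tuple


def dressed_ratio(dressed_length_m: float, arm_length_m: float) -> float:
    """Fraction of the arm covered by the sleeve, clamped to [0, 1]."""
    if arm_length_m <= 0:
        raise ValueError("arm length must be positive")
    return min(max(dressed_length_m / arm_length_m, 0.0), 1.0)


def average_dressed_ratio(trials: Iterable[Tuple[float, float]]) -> float:
    """Average the per-trial ratio over (dressed_length, arm_length) pairs."""
    return mean(dressed_ratio(dressed, arm) for dressed, arm in trials)


# Hypothetical measurements in meters, not results from the study.
sample_trials = [(0.60, 0.60), (0.45, 0.60), (0.30, 0.60)]
print(f"{average_dressed_ratio(sample_trials):.0%}")

# Average number of trials per participant reported in the article.
print(510 // 17)
```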

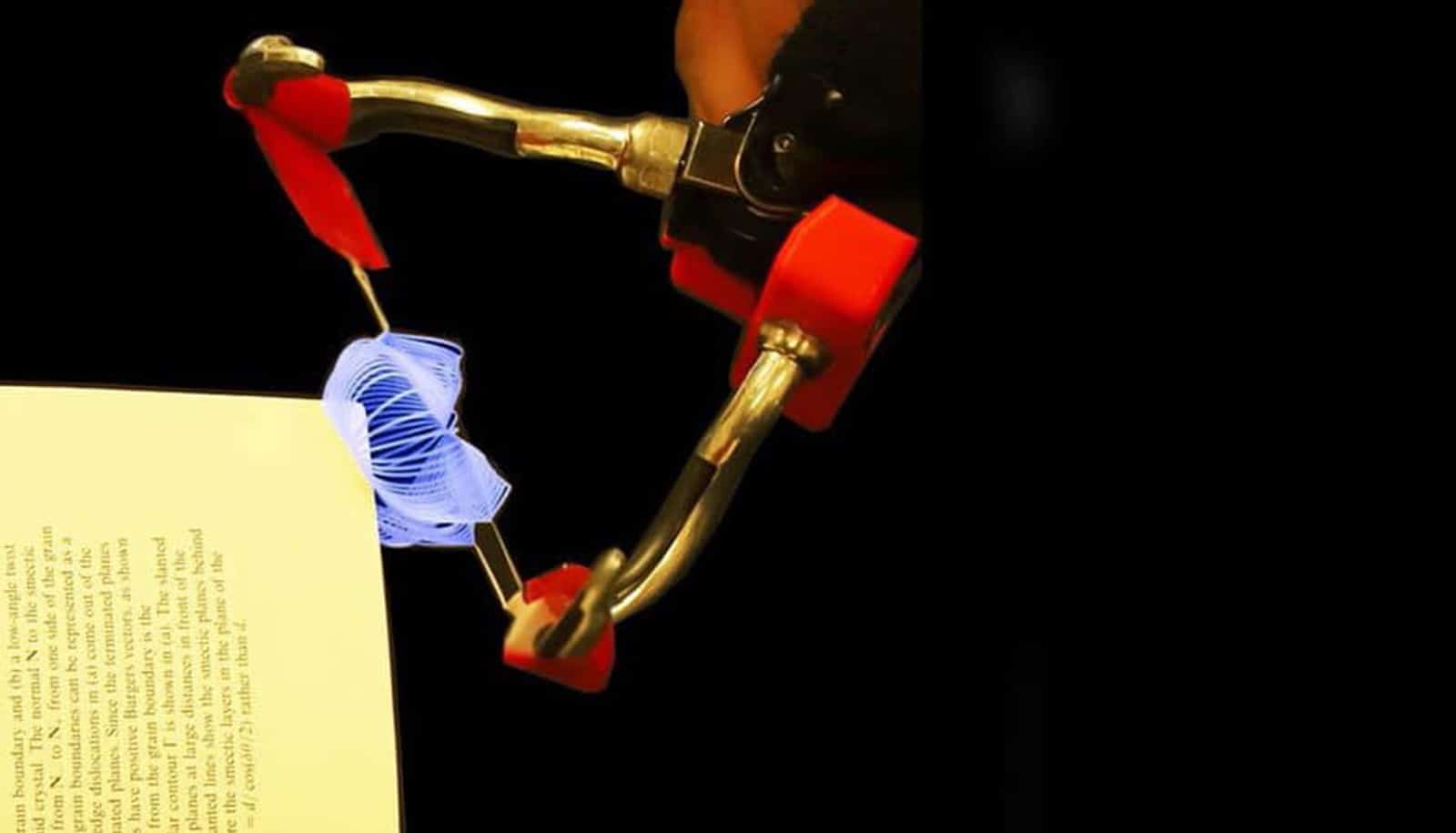

The researchers had to consider several challenges when designing their system. First, clothes are deformable in nature, making it difficult for the robot to perceive the full garment and predict where and how it will move.

“Clothes are different from rigid objects that enable state estimation, so we have to use a high-dimensional representation for deformable objects to allow the robot to perceive the current state of the clothes and how they interact with the human’s arm,” Wang says. “The representation we use is called a segmented point cloud. It represents the visible parts of the clothes as a set of points.”
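To make the idea of a segmented point cloud concrete, here is a minimal sketch of a data structure that stores 3D points alongside a per-point segment label. The class name, the two labels (`GARMENT` and `ARM`), and the coordinates are assumptions for illustration; the article does not describe the exact segments or format the team uses.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in meters

# Hypothetical segment labels.
GARMENT = 0
ARM = 1


@dataclass
class SegmentedPointCloud:
    """A set of 3D points where each point carries a segment label."""
    points: List[Point]
    labels: List[int]

    def __post_init__(self) -> None:
        if len(self.points) != len(self.labels):
            raise ValueError("each point needs exactly one label")

    def segment(self, label: int) -> List[Point]:
        """Return only the points that belong to the given segment."""
        return [p for p, l in zip(self.points, self.labels) if l == label]


# Two visible garment points and one arm point (made-up coordinates).
cloud = SegmentedPointCloud(
    points=[(0.10, 0.00, 0.50), (0.12, 0.01, 0.50), (0.30, 0.00, 0.45)],
    labels=[GARMENT, GARMENT, ARM],
)
print(len(cloud.segment(GARMENT)), len(cloud.segment(ARM)))
```

Because only visible surfaces produce points, such a representation captures what the camera currently sees of the garment and not its full hidden shape.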

Safe human-robot interaction was also crucial. It was important that the robot avoid both applying excessive force to the human arm and any other actions that could cause discomfort or compromise the individual’s safety. To mitigate these risks, the team rewarded the robot for gentle conduct.
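One common way to encourage gentle behavior in a learned policy is to subtract a penalty when the measured contact force exceeds a threshold. The sketch below shows that general idea only; the function name, the 5-newton threshold, and the weight are assumptions, and the article does not specify how the team's gentleness reward was defined.

```python
def force_penalty(force_n: float, threshold_n: float = 5.0,
                  weight: float = 0.1) -> float:
    """Return a non-positive reward term that grows with excess force.

    force_n:     measured contact force on the arm, in newtons (assumed).
    threshold_n: force below which no penalty applies (assumed value).
    weight:      penalty per newton above the threshold (assumed value).
    """
    excess = max(force_n - threshold_n, 0.0)
    return -weight * excess


# Gentle contact incurs no penalty; pressing harder is penalized.
for force in (3.0, 5.0, 15.0):
    print(force, force_penalty(force))
```

Added to a task reward, a term like this makes forceful actions less attractive to the policy even when they would move the garment faster.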

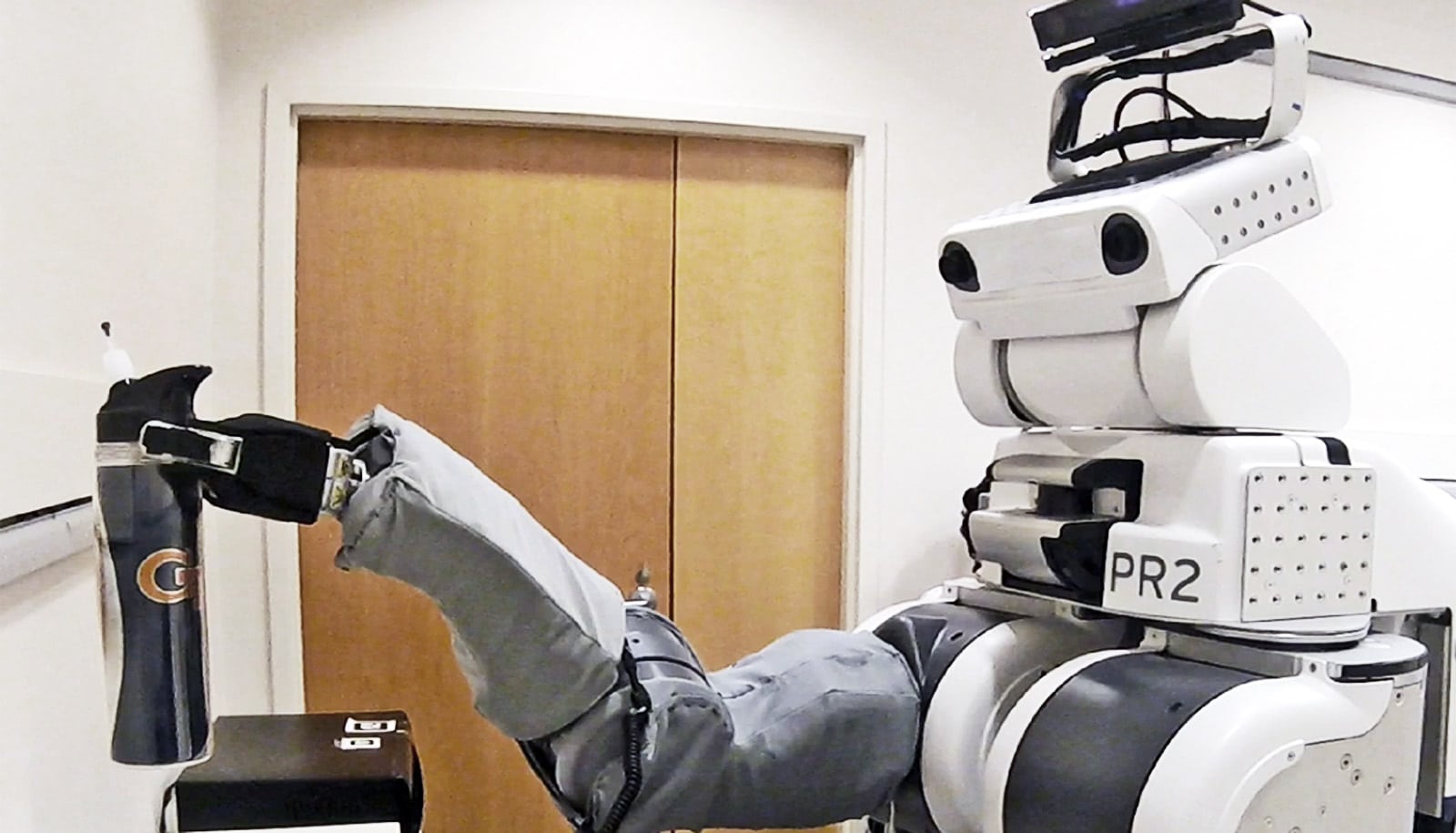

Future research could head in several directions. For example, the team wants to expand the capabilities of the current system by enabling it to put a jacket on both of a person’s arms or pull a T-shirt over their head. Both tasks require more complex design and execution. The team also hopes to make the system adapt to the human’s arm movements during the dressing process and to explore more advanced robot manipulation skills such as buttoning or zipping.

As the work progresses, the researchers intend to perform observational studies within nursing facilities to gain insight into the diverse needs of individuals and the improvements their current assistive dressing system requires.

Wang and Sun recently presented their research at the Robotics: Science and Systems conference. The students are advised by Zackory Erickson, assistant professor in the RI and head of the Robotic Caregiving and Human Interaction (RCHI) Lab; and David Held, associate professor in the RI leading the Robots Perceiving And Doing (RPAD) research group.

Source: Carnegie Mellon University