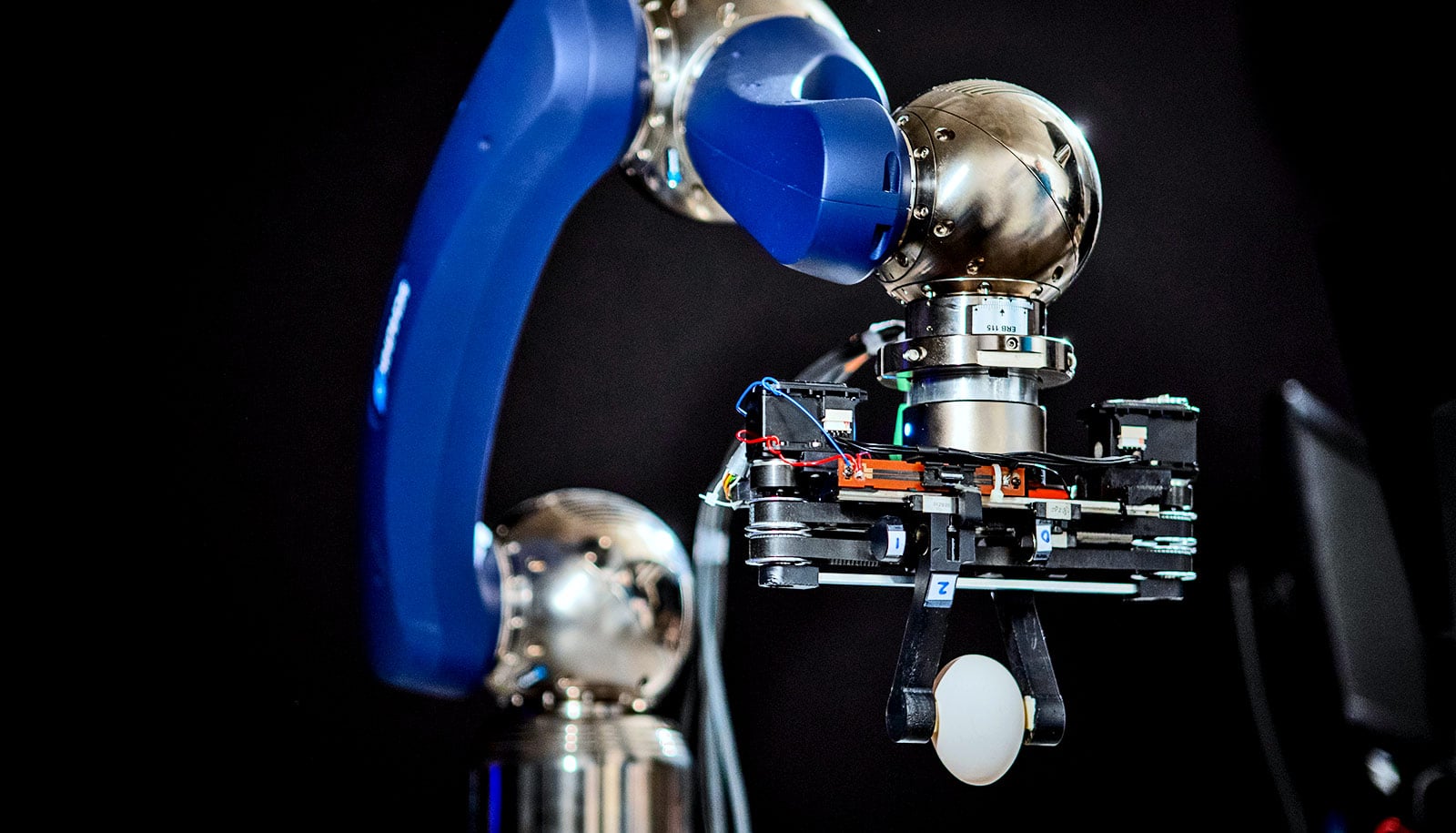

A new way to teach kitchen robots how to pick up transparent or reflective objects relies mainly on a color camera, report researchers.

Kitchen robots are a popular vision of the future, but if one of today’s robots tries to grasp a clear measuring cup or a shiny knife, it likely won’t be able to. Transparent and reflective objects are the stuff of robot nightmares.

Depth cameras, which shine infrared light on an object to determine its shape, work well for identifying opaque objects. But infrared light passes right through clear objects and scatters off reflective surfaces, says David Held, an assistant professor in Carnegie Mellon University’s Robotics Institute.

As a result, depth cameras can’t compute an accurate shape for transparent and reflective objects; what they return is largely flat or riddled with holes.

But a color camera can see transparent and reflective objects as well as opaque ones. So the researchers developed a system that uses a color camera to infer an object’s shape from its color image.

A standard camera can’t measure shape the way a depth camera can, but the researchers were nevertheless able to train the new system to imitate a depth-based system and implicitly infer shape well enough to grasp objects. To do so, they paired depth camera images of opaque objects with color images of those same objects.
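The article does not spell out the training procedure, so what follows is only a minimal sketch of the general idea it describes: a teacher-student setup (sometimes called cross-modal distillation) in which a model that sees only color features is trained to reproduce a depth-based model’s grasp scores on paired images of opaque objects. Everything here is hypothetical and not claimed to be the researchers’ method: linear models stand in for neural networks, and `TEACHER_W`, `MIX`, and the synthetic features are invented for illustration.

```python
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Hypothetical "teacher": a fixed depth-based grasp scorer standing in for a
# pretrained depth network. Its weights are invented for this sketch.
TEACHER_W = [0.8, -0.5, 0.3]


def teacher_score(depth_feat):
    return dot(TEACHER_W, depth_feat)


# Hypothetical paired data. For an opaque object, the depth features see the
# object's shape directly, while the color features are a fixed mix of it,
# so shape is only implicit in the color input.
MIX = [[1.0, 0.2, 0.0],
       [0.0, 1.0, 0.3],
       [0.1, 0.0, 1.0]]


def make_pair(rng):
    shape = [rng.uniform(-1.0, 1.0) for _ in range(3)]
    depth_feat = shape
    rgb_feat = [dot(row, shape) for row in MIX]
    return rgb_feat, depth_feat


def train_student(pairs, lr=0.1, epochs=200):
    """Fit a color-only linear 'student' to imitate the depth teacher."""
    v = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for rgb, depth in pairs:
            target = teacher_score(depth)  # the teacher's output is the label
            err = dot(v, rgb) - target
            # Stochastic gradient step on the squared error 0.5 * err**2.
            v = [vi - lr * err * xi for vi, xi in zip(v, rgb)]
    return v


def mean_abs_error(v, pairs):
    """How far the student's color-only scores are from the teacher's."""
    return sum(abs(dot(v, rgb) - teacher_score(depth))
               for rgb, depth in pairs) / len(pairs)
```

Usage, under the same assumptions: generate pairs with `rng = random.Random(0)`, train with `student = train_student(train_pairs)`, and check `mean_abs_error(student, held_out_pairs)` on fresh pairs. No human labels appear anywhere; the depth teacher supplies every training target, which mirrors the article’s point that paired depth and color images of opaque objects are enough to teach a color-only model.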

Once the system was trained, the researchers applied it to transparent and shiny objects. Using those color images, along with whatever scant information a depth camera could provide, the system grasped these challenging objects with a high degree of success.
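The article does not say how the color-based prediction and the scant depth readings are combined. One simple heuristic, shown here purely as a hypothetical illustration rather than the researchers’ approach, is to weight the depth-based score by the fraction of pixels in a grasp region that actually returned a depth reading. The sketch assumes missing readings are reported as 0 or NaN, a common convention for RGB-D sensors; `valid_fraction` and `fused_score` are invented names.

```python
import math


def valid_fraction(depth_patch):
    """Fraction of pixels in a flattened depth patch with a usable reading.

    Assumes invalid pixels are reported as 0 or NaN (check your sensor's driver).
    """
    if not depth_patch:
        return 0.0
    valid = [d for d in depth_patch if not math.isnan(d) and d > 0]
    return len(valid) / len(depth_patch)


def fused_score(rgb_score, depth_score, depth_patch):
    """Hypothetical fusion: trust depth in proportion to how much of it exists."""
    alpha = valid_fraction(depth_patch)
    return alpha * depth_score + (1 - alpha) * rgb_score
```

For a glass cup whose depth patch is mostly holes, such as `[0.0, float("nan"), 0.0, 0.42]`, only a quarter of the pixels are valid, so the color-based score dominates; for an opaque object with complete depth, the fused score falls back to the depth-based score.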

“We do sometimes miss,” Held says, “but for the most part it did a pretty good job, much better than any previous system for grasping transparent or reflective objects.”

The system can’t pick up transparent or reflective objects as efficiently as opaque objects, says Thomas Weng, a PhD student in robotics. But it is far more successful than depth camera systems alone.

And the multimodal transfer learning used to train the system was so effective that the color system proved almost as good as the depth camera system at picking up opaque objects.

“Our system not only can pick up individual transparent and reflective objects, but it can also grasp such objects in cluttered piles,” Weng says.

Other attempts at robotic grasping of transparent objects have relied either on training through exhaustive trial and error, on the order of 800,000 attempted grasps, or on expensive human labeling of objects.

The new system uses a commercial RGB-D camera that captures both color (RGB) and depth (D) images. With this single sensor, the system can sort through recyclables or other mixed collections of objects, whether opaque, transparent, or reflective.

The researchers will present the system this summer at the International Conference on Robotics and Automation (ICRA), which is being held virtually.

Additional coauthors are from BITS Pilani in India, ShanghaiTech, and Carnegie Mellon. The National Science Foundation, Sony Corporation, the Office of Naval Research, Efort Intelligent Equipment Co., and ShanghaiTech supported the work.

Source: Carnegie Mellon University