Researchers have built a new camera that could generate four-dimensional images and capture nearly 140 degrees of information.

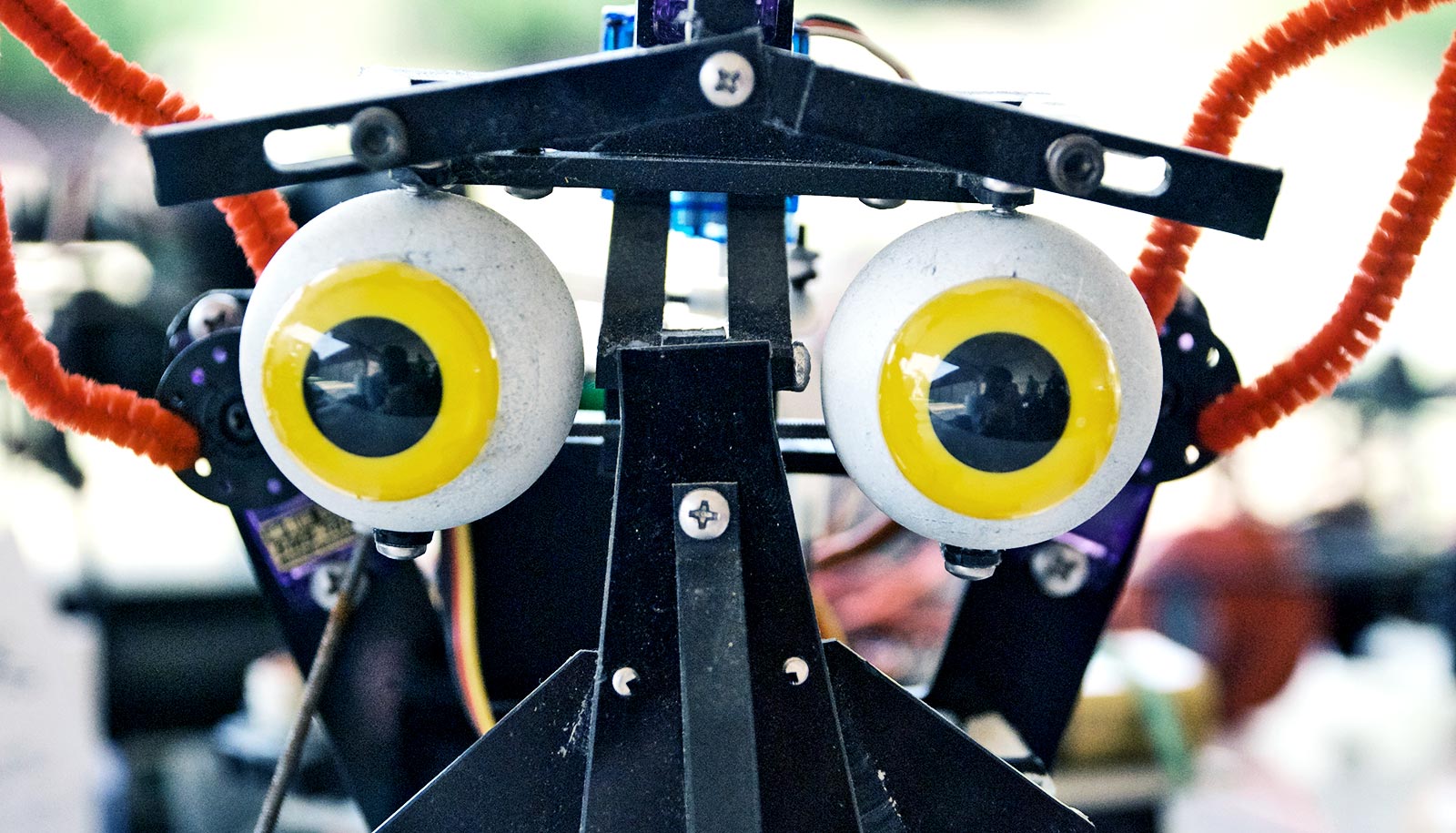


The camera could generate the kind of information-rich images that robots need to navigate the world.

“We want to consider what would be the right camera for a robot that drives or delivers packages by air. We’re great at making cameras for humans but do robots need to see the way humans do? Probably not,” says Donald Dansereau, a postdoctoral fellow in electrical engineering at Stanford University.

With robotics in mind, Dansereau and Gordon Wetzstein, an assistant professor of electrical engineering, along with colleagues from the University of California, San Diego, have created the first-ever single-lens, wide field of view, light field camera. The researchers presented their work at the computer vision conference CVPR 2017.

As technology stands now, robots have to move around, gathering different perspectives, if they want to understand certain aspects of their environment, such as movement and material composition of different objects. This camera could allow them to gather much the same information in a single image. The researchers also see this being used in autonomous vehicles and augmented and virtual reality technologies.

“It’s at the core of our field of computational photography,” says Wetzstein. “It’s a convergence of algorithms and optics that’s facilitating unprecedented imaging systems.”

Peephole vs. window

The difference between looking through a normal camera and the new design is like the difference between looking through a peephole and a window, the scientists say.

“A 2D photo is like a peephole because you can’t move your head around to gain more information about depth, translucency, or light scattering,” Dansereau says. “Looking through a window, you can move and, as a result, identify features like shape, transparency, and shininess.”

That additional information comes from a type of photography called light field photography, which professors Marc Levoy and Pat Hanrahan of Stanford first described in 1996.

Light field photography captures the same image as a conventional 2D camera plus information about the direction and distance of the light hitting the lens, creating what’s known as a 4D image. A well-known feature of light field photography is that it allows users to refocus images after they are taken because the images include information about the light position and direction. Robots might use this to see through rain and other things that could obscure their vision.
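The refocusing idea can be illustrated with a toy "shift-and-add" sketch. This is not the researchers' code or their camera's pipeline. It is a common textbook approach, simplified here to one spatial and one angular dimension. The function names `synthesize_views` and `refocus`, the integer pixel shifts, and the tiny test scene are all assumptions made for illustration.

```python
def synthesize_views(scene, num_views, disparity):
    """Toy light field: each view sees `scene` shifted by disparity * (u - center) pixels.

    `disparity` stands in for depth (hypothetical, integer pixels per view step).
    """
    center = num_views // 2
    width = len(scene)
    views = []
    for u in range(num_views):
        shift = disparity * (u - center)
        # Pixel x in view u shows scene point x - shift; out-of-range reads as 0.
        row = [scene[x - shift] if 0 <= x - shift < width else 0.0
               for x in range(width)]
        views.append(row)
    return views


def refocus(views, slope):
    """Shift-and-add: undo a per-view shift of slope * (u - center), then average."""
    center = len(views) // 2
    width = len(views[0])
    out = []
    for x in range(width):
        total, count = 0.0, 0
        for u, row in enumerate(views):
            src = x + slope * (u - center)
            if 0 <= src < width:  # skip samples that fall outside the view
                total += row[src]
                count += 1
        out.append(total / count if count else 0.0)
    return out


# A single bright point, seen from three viewpoints with a disparity of 2 pixels.
scene = [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
views = synthesize_views(scene, num_views=3, disparity=2)

sharp = refocus(views, slope=2)    # slope matches the point's disparity
blurred = refocus(views, slope=0)  # slope does not match: the point smears out
```

When the chosen slope matches the disparity of an object, its samples line up across views and it comes out sharp. Objects at other depths are averaged across misaligned positions and blur, which is the effect behind after-the-fact refocusing.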


The extremely wide field of view, which covers more than a third of the circle around the camera, comes from a specially designed spherical lens. However, this lens also produced a significant hurdle: how to translate a spherical image onto a flat sensor.

Previous approaches to solving this problem had been heavy and error-prone. Combining the optics and fabrication expertise of the UC San Diego team with the signal processing and algorithmic expertise of Wetzstein’s lab produced a digital solution that not only makes these extra-wide images possible but also enhances them.

This camera system’s wide field of view, detailed depth information, and potential compact size are all desirable features for imaging systems incorporated in wearables, robotics, autonomous vehicles, and augmented and virtual reality.

“It could enable various types of artificially intelligent technology to understand how far away objects are, whether they’re moving and what they’re made of,” says Wetzstein. “This system could be helpful in any situation where you have limited space and you want the computer to understand the entire world around it.”

Although it can also work like a conventional camera at far distances, this camera is designed to improve close-up images. Examples where it would be particularly useful include robots that have to navigate through small areas, landing drones, and self-driving cars.

As part of an augmented or virtual reality system, its depth information could result in more seamless renderings of real scenes and support better integration between those scenes and virtual components.


The camera is currently a proof of concept, and the team plans to build a compact prototype next. That version would ideally be small and light enough to test on a robot. A camera that humans could wear could follow soon after.

“Many research groups are looking at what we can do with light fields but no one has great cameras. We have off-the-shelf cameras that are designed for consumer photography,” says Dansereau.

“This is the first example I know of a light field camera built specifically for robotics and augmented reality. I’m stoked to put it into people’s hands and to see what they can do with it,” he says.

Additional coauthors of the paper are from the University of California, San Diego. This research was funded by the NSF/Intel Partnership on Visual and Experiential Computing and DARPA.

Source: Stanford University