Natural language processing could help pediatricians draw the fine line between a teen’s right to privacy and a parent’s right to know.

Two laws, one new, the other on the books since the 1980s, have complicated the lives of pediatricians. The federal government’s 21st Century Cures Act, among its many mandates, requires physicians nationwide make available to patients their complete electronic medical records. With the click of a mouse, all personal health information, test results, prescribed medications, and clinical notes must be accessible digitally for patient review.

Meanwhile, a confidentiality law in California simultaneously demands that pediatricians protect the privacy of their adolescent patients. That is, by law they must not divulge to parents certain details about their dependent child’s mental health, sexual history, drug use, and other confidential information.

“Most states in the country have some form of confidentiality laws, so there is a conflict between the full disclosure in the federal law and the privacy protections of the state laws,” says Keith Morse, a pediatrician who leads the Translational Data Science at Packard (TDaP) program. TDaP is specifically charged with exploring the rapidly emerging applications of machine learning to clinical care at Lucile Packard Children’s Hospital.

What qualifies as confidential for a given patient is left up to the doctor’s interpretation and therein lies a problem. Doctors often disagree about such matters and now those subtle distinctions are weighted not only with medical consequence but with legal consequence, as well.

Into that maw have leapt Morse and a team of medical doctors, bioinformatics experts, and computer scientists from Stanford University who have tapped artificial intelligence to help pediatricians flag potentially confidential information in their clinical notes for closer review to ensure compliance with both laws. The authors introduced their algorithm in a peer-reviewed paper that has been submitted for publication, and it is already in use by pediatricians in the Stanford Children’s Health community.

The fundamental issue is trust.

“We want the patients, in this case teens, to feel that they can be fully open and honest with their doctors without worrying that their parents might learn personal details they would rather their parents not know,” Morse says. “But the parents have an interest in their child’s medical history, too.”

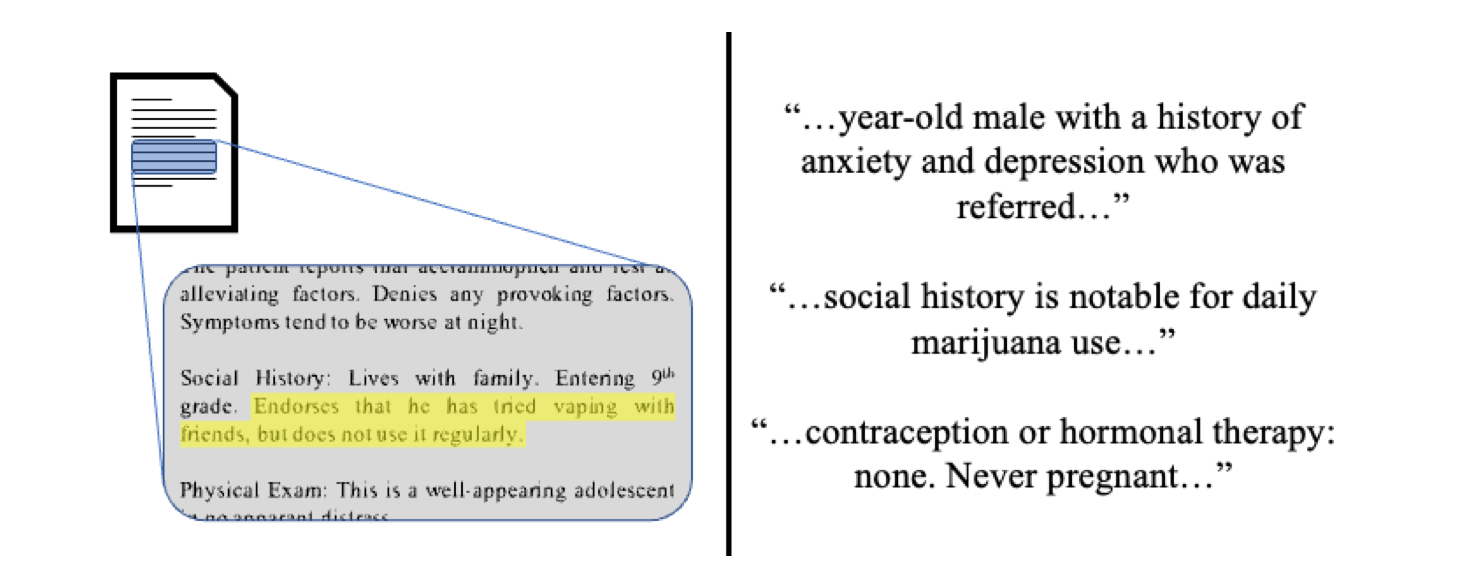

The algorithm does not redact confidential information from the medical record, but rather uses natural language processing to compare clinical note content against a database to “flag” phrases, sentences, and paragraphs that it thinks might be at risk of violating confidentiality laws. The algorithm then calculates a “risk score” to help physicians make their confidentiality determinations, improve compliance with the applicable laws, and expedite the manual review process.

“It’s a very fine needle we have to thread here,” adds Naveed Rabbani, an engineer turned pediatrician at Stanford Medicine and lead author of the study. “Artificial intelligence can help make that key determination between what is confidential and what is necessary to provide the patient the very best medical care we can offer.”

Doctors’ notes and teen privacy

To build their algorithm and dataset, the researchers enlisted a team of five physicians to evaluate a randomly selected trove of clinical notes from 1,200 adolescent patients ages 12 through 17. The physicians who had been specifically trained in the nuances of California’s adolescent confidentiality laws annotated the dataset based on that knowledge. In their analysis the researchers estimate that slightly more than 20% of clinical notes for adolescents contain confidential information, indicating the issue is worthy of concern.

The 1,200 records were disbursed evenly among the five doctors, with some overlap. Not all doctors agree on what constitutes “confidential” information, and the overlap helped the AI research team identify the interpretive gray areas.

The algorithm then evaluated new, never-before-seen clinical notes for potential concerns, where it calculated the risk score and flagged content for the doctor’s review.

“The biggest surprise to me in this research was just how hard it is to determine confidentiality,” Rabbani says. “The best care and patient disclosure are sometimes contradictory goals. That’s where AI really helps.”

Real-time analysis

The researchers anticipate that such an algorithm, if it was to achieve wider use, would work in real time as the doctor is entering notes—flagging content for immediate categorization as the notes are being transcribed.

With potential conflicts flagged and graded by confidentiality risk, the physician can quickly make an informed determination and either leave the note open to both parent and child or isolate confidential information in special note type that appears only when the minor patient reviews the electronic health record.

At this point however, the resulting algorithm is a proof-of-concept prototype that demonstrates both AI’s skill at discerning nuances in written language and its ability to expedite the physician’s annotation process while helping to abide by legal confidentiality requirements.

One impediment to wider use is that the dataset was based on clinical notes culled from the Stanford medical community. To be reliable universally, the researchers anticipate that future versions would need to be trained on notes from many other institutions, as note-taking style varies hospital to hospital as do confidentiality laws state to state.

On the upside, they believe that their results indicate their AI methods might be transferrable to other areas of medicine, notably reproductive health in light of the recent ruling overturning abortion protections, but also adult histories of HIV infection, sickle cell anemia, and substance use, among others.

Source: Stanford University