Computer models of artificial neurons may shed light on how real neurons save energy in ways that contemporary computer processors do not, researchers say.

When it fires, a neuron consumes significantly more energy than an equivalent computer operation. And yet, a network of coupled neurons can continuously learn, sense, and perform complex tasks at energy levels that are currently unattainable even for state-of-the-art processors.

Using simulated silicon “neurons,” the researchers found that energy constraints on a system, combined with the intrinsic tendency of neurons to move to their lowest-energy configuration, lead to a dynamic, at-a-distance communication protocol that is both more robust and more energy-efficient than traditional computer processors.

The research appears in the journal Frontiers in Neuroscience.

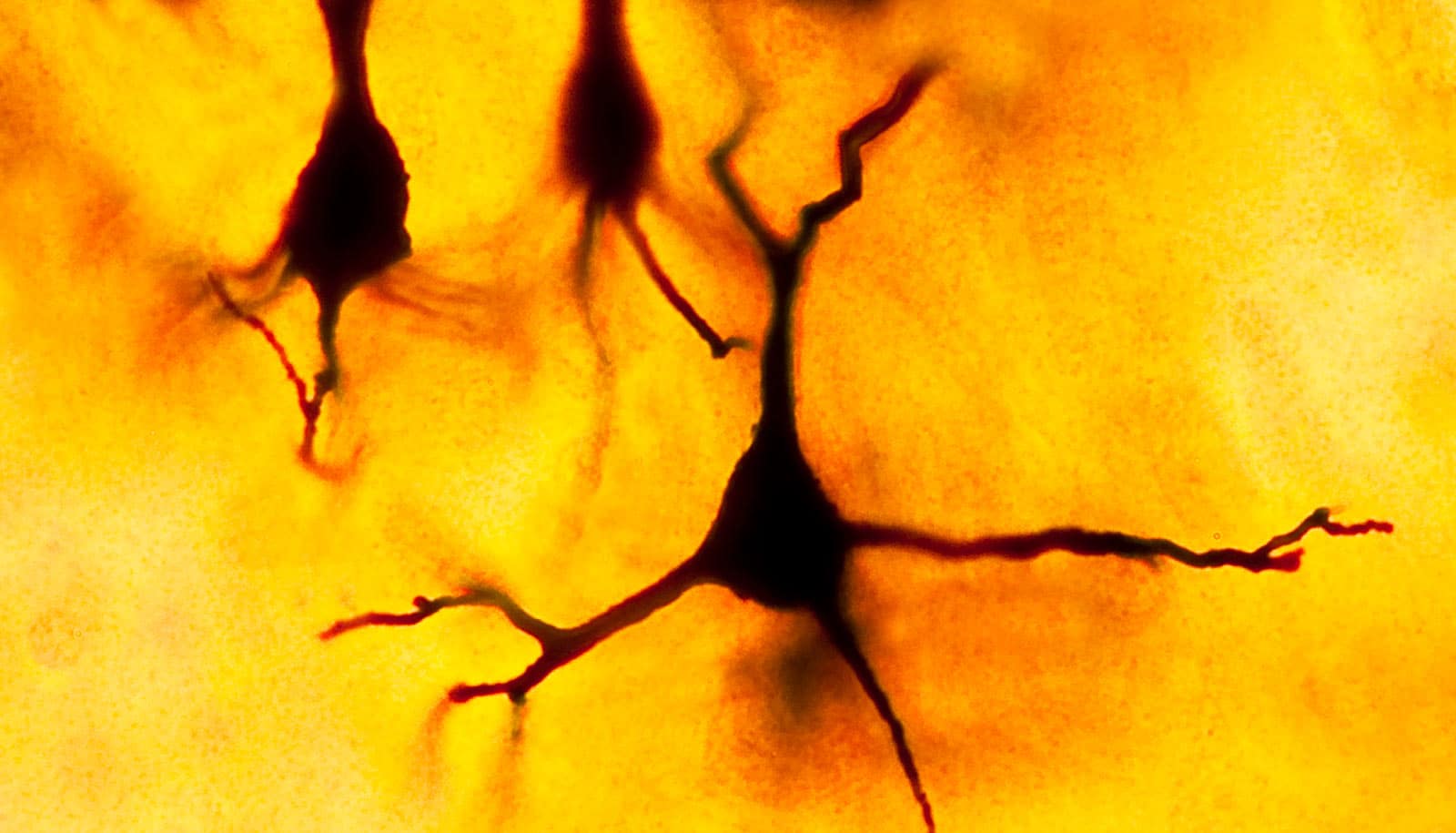

Computer emulation of fruit fly sub-connectome (Credit: Chakrabartty lab)

Less is more

It’s a case of doing more with less.

Lead author Ahana Gangopadhyay, a doctoral student in Shantanu Chakrabartty’s lab at the McKelvey School of Engineering at Washington University in St. Louis, has been using computer models to study the energy constraints on silicon neurons: artificially created neurons, connected by wires, that show the same dynamics and behavior as the neurons in our brains.

Like biological neurons, their silicon counterparts depend on specific electrical conditions to fire, or spike. These spikes are the basis of neuronal communication, zipping back and forth and carrying information from neuron to neuron.

The researchers first looked at the energy constraints on a single neuron. Then a pair. Then, they added more.

“We found there’s a way to couple them where you can use some of these energy constraints, themselves, to create a virtual communication channel,” says Chakrabartty, professor in the Department of Electrical and Systems Engineering.

A group of neurons operates under a common energy constraint. So when a single neuron spikes, it necessarily changes the energy available not just to the neurons it is directly connected to, but to all the others operating under the same constraint.
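To make the idea of a shared constraint concrete, here is a minimal Python toy. It is not the researchers’ model: it simply assumes a fixed total energy budget that is split among neurons in proportion to their demand, and the function name `allocate_energy` and all the numbers are hypothetical. Neuron 0 is not wired to any of the others, yet its spike shrinks their shares.

```python
def allocate_energy(demands, budget):
    """Split a fixed energy budget across neurons in proportion to demand.

    Toy assumption (not the published model): the shares always sum to
    `budget`, so a change in one neuron's demand changes every other
    neuron's share, whether or not the neurons are connected.
    """
    total = sum(demands)
    if total <= 0:
        raise ValueError("total demand must be positive")
    return [budget * d / total for d in demands]


budget = 4.0
resting = [1.0, 1.0, 1.0, 1.0]   # four idle neurons with equal demand
before = allocate_energy(resting, budget)

spiking = resting.copy()
spiking[0] = 5.0                 # neuron 0 spikes and draws more energy
after = allocate_energy(spiking, budget)

print(before)  # [1.0, 1.0, 1.0, 1.0]
print(after)   # [2.5, 0.5, 0.5, 0.5]
```

The other three neurons drop from 1.0 to 0.5 without receiving any signal; the change reaches them only through the common budget, which is the intuition behind a “virtual” channel.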

A virtual bug brain?

Li Xiang and Zeheng Song, undergraduate students in Chakrabartty’s lab, have been able to import a connectome—a representation of an actual, biological assembly of neurons—and emulate its dynamics using their model and about 10 million silicon neurons.

“A bug’s brain has about 1 million neurons,” Chakrabartty says. “We just don’t fully understand its connectivity, but in theory, we should be able to emulate a bug’s brain completely.”

Because of this shared constraint, spiking neurons create perturbations in the system, allowing each neuron to “know” which others are spiking, which are responding, and so on. It’s as if the neurons were all embedded in a rubber sheet; a single ripple, caused by a spike, would affect them all. And, like other physical systems, networks of silicon neurons tend to settle into their lowest-energy states, while each neuron is also influenced by the others in the network.

These constraints come together to form a kind of secondary communication network, through which additional information can be conveyed by the dynamic but synchronized topology of spikes. It’s like the rubber sheet vibrating in a synchronized rhythm in response to multiple spikes.

The information carried by this topology reaches not just the neurons that are physically connected, but every neuron under the same energy constraint, including those with no physical connection.

Under the pressure of these constraints, Chakrabartty says, “They learn to form a network on the fly.”

This makes communication much more efficient than in traditional computer processors, which lose most of their energy to linear communication, in which A must first send a signal through B in order to communicate with C.

Using these silicon neurons in computer processors gives the best trade-off between efficiency and processing speed, Chakrabartty says. It will allow hardware designers to build systems that take advantage of this secondary network, computing not only linearly but also performing additional computation on the secondary network of spikes.

The immediate next steps, however, are to create a simulator that can emulate billions of neurons. Then the researchers will begin building a physical chip.

Support for the work came, in part, from the National Science Foundation.