The structure of our retinas is miles ahead of anything human engineering can achieve so far, report researchers.

If you wanted to design the ideal low-energy, light-detecting device for a future camera or a prosthetic retina, you’d reach for something called “efficient coding theory” to lay out the array of sensors.

Or you could just look at a mammalian retina.

In a pair of papers on retinal structure, neurobiologists show that the rigors of natural selection and evolution have shaped the retinas in our eyes just as this theory of optimization would predict.

In a previous paper published last March in Nature, the researchers showed that rat and monkey retinas are laid out in patterns of sensitivity that mimic what efficient coding theory would predict. Different sets of retinal neurons are sensitive to individual stimuli: bright, dark, moving, and so on, and they’re arranged in a three-dimensional mosaic of cells that works to add up the image.

Now, in a paper in the Proceedings of the National Academy of Sciences, “we set out to understand that, through a lot of simulation and a little bit of pencil and paper math,” says John Pearson, an assistant professor of biostatistics and bioinformatics in the Duke University School of Medicine. “The mosaics don’t just randomly overlap, but they don’t overlap in a highly ordered way.”

“We’re making a prediction about how literally thousands of cells of multiple different types arrange themselves across space,” says Greg Field, an assistant professor of neurobiology in the Duke School of Medicine.

“The monkey retina and our retinas are nearly indistinguishable,” he says. “The fact that we observed this in the monkey retina gives us incredible confidence that our retinas are laid out in the same way.”

Inside the retina

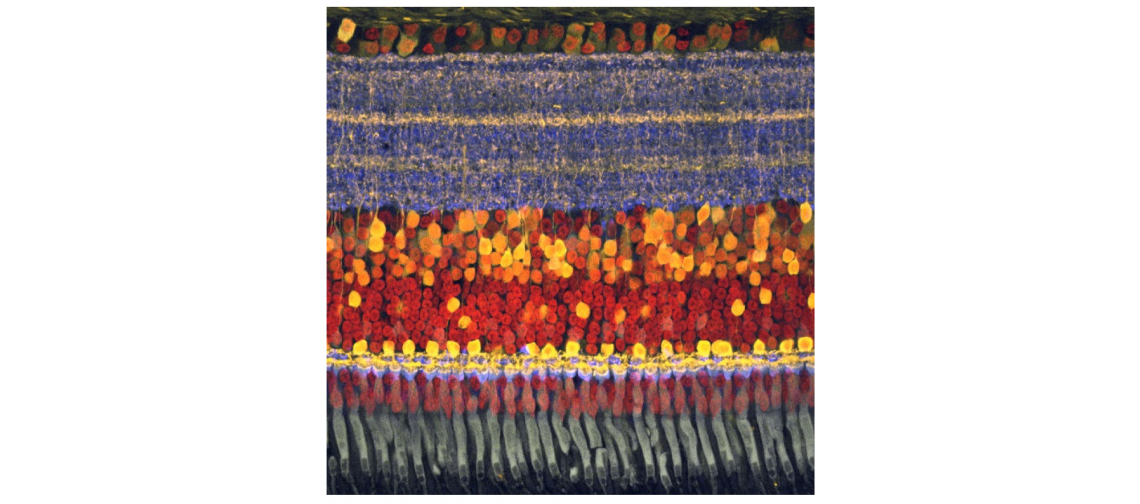

In a cross-section of the retina, the bodies of the ganglion cells, round orbs that contain the nucleus, line up in a layer together, but they extend their tree-like, branching dendrites into a thick layer that looks like the tangled roots of a pot-bound houseplant. It’s in this thicker, spectacularly complex layer that mosaics of different sensitivities are laid out in ordered patterns.

The ganglion cells below the dendrite layer just output ones and zeros, essentially. The sensitivity comes from the mosaic itself. And that mosaic is not only laid out optimally, it adapts to current conditions.

“The retina is not one mosaic. It’s a whole bunch of stacked mosaics. And each of these mosaics encodes something different about the visual field,” Field says. The mammalian retina parses some 40 different visual features.

“The depth that the dendrites reach in the retina is kind of like an addressing scheme, where if you’re deeper, you get one kind of information,” Field says. “If it’s more shallow, it gets a different kind of information. In fact, the deeper ones get the ‘off’ signals, and the more shallow ones get the ‘on’ signals. So you can have many detectors sampling the same place in the visual world, because they’re using depth to convey different kinds of signals.”

Avoiding the ‘noise’

One reason the array is so efficient is that the cells conserve energy by not responding to some stimuli. In a very dark room, the environment is “noisy” for the receptors, so they tune out most of the static and only respond to something that’s quite bright.

“The more noise there is in the world, the pickier the cell can be about what it will respond to,” Pearson says. “And when they get pickier, it turns out that there’s less redundancy in them. And so you can deploy them in ways that don’t have to overlap anymore.”

If there were never any noise in the visual environment, the mosaics of detectors would be aligned on top of each other, explains graduate student Na Young Jun, who is the first author of one of the papers and a coauthor of the other. But she computationally modeled 168 different noise conditions and found that the higher the noise, the greater the offset between detectors.

In a living mammalian retina, the team found the mosaics are offset just as the theory would predict, meaning the retina is optimized to deal with higher noise conditions.

If you’re a small, delicious woodland creature like a mouse, “your survival doesn’t hinge so much on the things that are easy to see,” Field says. “It hinges on the things that are hard to see. And so the retina is really geared toward being optimized to detect those things that are hard to see.”

“This is an important design feature to incorporate in any kind of retinal prosthetic that you’d want to build,” Field says. But getting this idea into a smartphone may take a while. For one thing, the retina is alive and self-assembled, and it adapts and changes with time.

Our retinas vs. smartphone sensors

The energy consumption of the human retina is also orders of magnitude less than even the best smartphone sensor at the moment, Jun says.

For example, the 5-megapixel, 1/5th of an inch OmniVision OV5675 smartphone image sensor consumes 1.92×10⁻¹⁰ watts. The human retina is conservatively estimated to consume about 6% of that (1.27×10⁻¹¹ watts in bright light). In dim conditions, the eye’s energy consumption goes up to about 5.08×10⁻¹¹ watts, but it also captures single photons that no smartphone camera ever could.
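For readers who want to check the arithmetic, here is a minimal sketch in Python that compares the power figures quoted above. The variable and function names are illustrative and are not drawn from the researchers' work. The numbers are taken as the article states them, in watts.

```python
# Power figures as quoted in the article, in watts.
SENSOR_W = 1.92e-10         # OmniVision OV5675 image sensor
RETINA_BRIGHT_W = 1.27e-11  # human retina, bright light
RETINA_DIM_W = 5.08e-11     # human retina, dim light


def percent_of(value, reference):
    """Return value expressed as a percentage of reference."""
    return 100.0 * value / reference


# Retina power as a share of the sensor's power, in each lighting condition.
bright_pct = percent_of(RETINA_BRIGHT_W, SENSOR_W)
dim_pct = percent_of(RETINA_DIM_W, SENSOR_W)

# How much more power the retina draws in dim light than in bright light.
dim_vs_bright = RETINA_DIM_W / RETINA_BRIGHT_W

print(f"Bright light: {bright_pct:.1f}% of the sensor's power")
print(f"Dim light:    {dim_pct:.1f}% of the sensor's power")
print(f"Dim vs. bright: about {dim_vs_bright:.1f} times")
```

On these figures, the retina in bright light works out to between 6% and 7% of the sensor's consumption, in line with the article's "about 6%." In dim light it uses about four times as much as in bright light, which is still roughly a quarter of what the sensor draws.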

The next feature of the system the team would like to tackle is the element of time—differences in the response times of retinal cells that add up to form a sense of motion, or an interpretation of moving images. Some of it, Jun says, will be dependent on the speed at which individual detectors fire.

Funding for the research came from the National Eye Institute of the US National Institutes of Health, a Ruth K. Broad postdoctoral fellowship, and the Whitehead Scholars Program.

Source: Duke University