Tactile feedback on the skin doubled the ability of blindfolded users of a prosthetic hand to discern the size of objects they picked up.

“Humans have an innate sense of how the parts of their bodies are positioned, even if they can’t see them,” says Marcia O’Malley, professor of mechanical engineering at Rice University. “This ‘muscle sense’ is what allows people to type on a keyboard, hold a cup, throw a ball, use a brake pedal, and do countless other daily tasks.”

The scientific term for this muscle sense is proprioception, and O’Malley’s Mechatronics and Haptic Interfaces Lab (MAHI) has worked for years to develop technology that would allow amputees to receive proprioceptive feedback from artificial limbs.

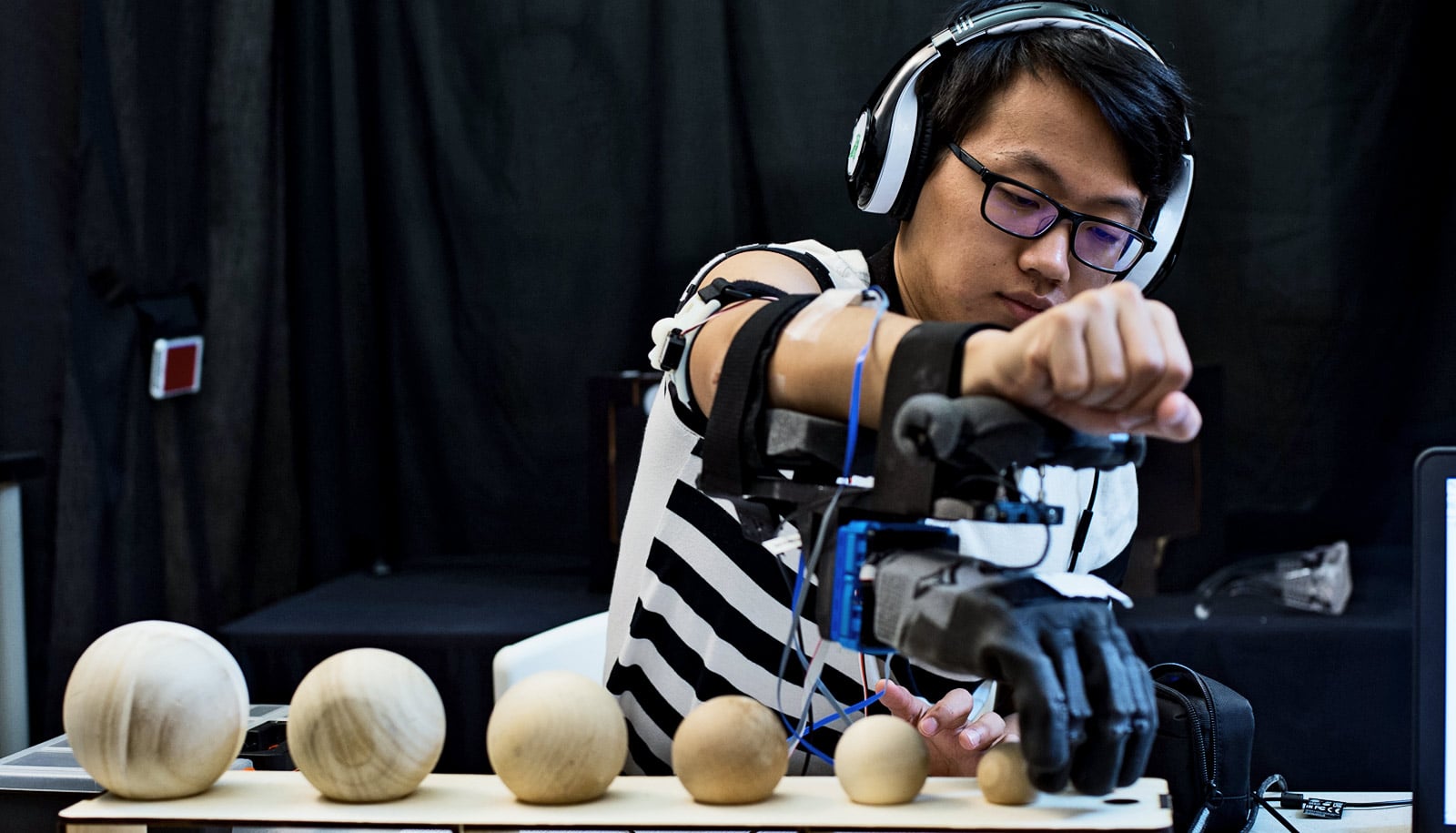

In a new paper to be presented June 7 at the World Haptics 2017 conference in Fürstenfeldbruck, Germany, O’Malley and colleagues demonstrate that 18 non-amputee test subjects performed significantly better on size-discrimination tests with a prosthetic hand when they received haptic feedback from a simple skin-stretch device on the upper arm. The study is the first to test a prosthesis in combination with a skin-stretch rocking device for proprioception.

An estimated 1.7 million people in the US live with the loss of a limb. Traditional prostheses restore some day-to-day function, but very few provide sensory feedback. For the most part, an amputee today must see their prosthesis to properly operate it.

Improved computer processors, inexpensive sensors, and vibrating motors from cellphones and other electronics have created new possibilities for adding tactile feedback, also known as haptics, to prosthetics, and O’Malley’s lab has done research in this area for more than a decade.

“We’ve been limited to testing haptic feedback with simple grippers or virtual environments that replicate what amputees experience,” she says. “That changed when I was contacted last year by representatives of Antonio Bicchi’s research group at [University of Pisa and the Italian Institute of Technology] who were interested in testing their prosthetic hand with our haptic feedback system.”

Skin stretching

In experiments at Rice beginning late last year, Pisan graduate student Edoardo Battaglia and Rice graduate student Janelle Clark tested MAHI’s Rice Haptic Rocker in conjunction with the Pisa/IIT SoftHand. They measured how well blindfolded subjects could distinguish the size of grasped objects both with and without proprioceptive feedback.

While some proprioceptive technologies require surgically implanted electrodes, the Rice Haptic Rocker has a simple, noninvasive user interface: a rotating arm that brushes a soft rubber pad over the skin of the arm. At rest, when the prosthetic hand is fully open, the rocker arm does not stretch the skin. As the hand closes, the arm rotates, and the more the hand closes, the more the skin is stretched.

“We’re using the tactile sensation on the skin as a replacement for information the brain would normally get from the muscles about hand position,” Clark says. “We’re essentially mapping from feedback from one source onto an aspect of the prosthetic hand. In this case, it’s how much the hand is open or closed.”
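
The mapping Clark describes can be pictured with a short sketch. This is an illustration only, not the lab's implementation: the article says only that a fully open hand produces no stretch and that stretch grows as the hand closes, so the straight-line relationship, the function name `rocker_angle`, and the 60-degree maximum are all assumptions made for the example.

```python
# Hypothetical maximum rotation of the rocker arm, in degrees.
# The article gives no figure; 60 is an assumed value for illustration.
MAX_ROCKER_ANGLE_DEG = 60.0


def rocker_angle(hand_closure: float,
                 max_angle_deg: float = MAX_ROCKER_ANGLE_DEG) -> float:
    """Map hand closure to a rocker-arm angle in degrees.

    hand_closure is 0.0 when the hand is fully open and 1.0 when it is
    fully closed. A linear mapping is assumed here; the source only
    states that more closure means more skin stretch.
    """
    # Clamp so out-of-range readings cannot over-rotate the arm.
    closure = min(max(hand_closure, 0.0), 1.0)
    return closure * max_angle_deg


if __name__ == "__main__":
    for closure in (0.0, 0.25, 0.5, 1.0):
        print(f"closure={closure:.2f} -> angle={rocker_angle(closure):.1f} deg")
```

Any relationship in which the angle never decreases as the hand closes would fit the description equally well; the straight line is simply the easiest one to read.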

Flex a muscle, use the hand

Like the Rice Haptic Rocker, the SoftHand uses a simple design. Co-creator Manuel Catalano, a postdoctoral research scientist at IIT/Pisa, says the design inspiration comes from neuroscience.

“Human hands have many joints and articulations, and reproducing and controlling that in a robotic hand is very difficult,” he says. “When you have to grasp something, your brain doesn’t program the movement of each finger. Your brain has patterns, called synergies, that coordinate all the joints (in the hand).”

The Pisa/IIT SoftHand uses a control synergy just like people do in everyday life, Catalano says. “At the same time, thanks to the intrinsic capability of the SoftHand to adapt and deform with the environment, it is robust and able to grasp objects in many different ways.”

Battaglia says neurological studies have identified a set of synergies for the hand. People use these alone or in combination to perform tasks as simple as turning a doorknob and as complex as playing the piano. Grasping an object, like a cup or a coat hanger, is one of the simplest.

“Experiments show that one synergy explains more than 50 percent of all grasps,” he says. “SoftHand is designed to mimic this. It’s very simple. There is just one motor and one control wire to open and close all the fingers at once.”

In tests, subjects used the SoftHand to grasp objects of varying shapes and sizes, ranging from grapefruit-sized balls to coins (quarters). To close the hand, subjects simply flexed a muscle in their forearm. Electrodes taped to the arm picked up electric signals from the flexing muscle and transmitted those to the motor in the SoftHand.
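
One common way to turn a muscle signal into a single open-or-close command is to rectify and smooth the signal, then scale it. The sketch below shows that general approach. The article does not describe the signal processing the researchers used, so the moving-average smoothing, the function names `emg_envelope` and `close_command`, and the rest and maximum levels are hypothetical.

```python
from collections import deque


def emg_envelope(samples, window=5):
    """Rectify raw muscle-signal samples and smooth them.

    Uses a moving average over the last `window` rectified samples.
    This is an assumed processing step, not the study's method.
    """
    recent = deque(maxlen=window)
    envelope = []
    for sample in samples:
        recent.append(abs(sample))            # rectify: keep magnitude only
        envelope.append(sum(recent) / len(recent))
    return envelope


def close_command(level, rest_level=0.1, max_level=1.0):
    """Scale an envelope level to a closure command between 0 and 1.

    rest_level and max_level are hypothetical calibration values:
    at or below rest_level the hand stays open (0.0), and at or above
    max_level it closes fully (1.0).
    """
    if max_level <= rest_level:
        raise ValueError("max_level must be greater than rest_level")
    scaled = (level - rest_level) / (max_level - rest_level)
    return min(max(scaled, 0.0), 1.0)


if __name__ == "__main__":
    raw = [0.02, -0.05, 0.4, -0.6, 0.8, -0.9, 0.7]
    for level in emg_envelope(raw, window=3):
        print(f"envelope={level:.3f} -> close={close_command(level):.2f}")
```

A single number between 0 and 1 is enough here because, as described above, the SoftHand has just one motor that opens and closes all the fingers at once.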

For the size-discrimination test, subjects wore blindfolds while grasping two different objects. Researchers then asked them which of the two was larger. Without haptic feedback, the blindfolded subjects had to base their guesses on intuition. They chose correctly only about 33 percent of the time, which is what one would expect from a random choice. When they performed the same tests with feedback from the Rice Haptic Rocker, the subjects correctly distinguished the larger from smaller objects more than 70 percent of the time.

The researchers are following up to see if amputees get a similar benefit from using the haptic rocker in conjunction with the SoftHand.

“One of the things that makes the research we do in the MAHI lab unique is that we involve end-users from the very beginning, from the design and concept stage all the way to testing and evaluation of our systems,” O’Malley says. “Through our close collaborations in the Texas Medical Center, we are able to have those interactions with the end users—with patients, physical therapists, and doctors—all of the way through our design and evaluation process.”

The National Science Foundation and the European projects WEARHAP, SOFTPRO, and SoftHands supported the work.

Source: Rice University