Neuroscientists say a full understanding of the complexity of the human brain will require new research strategies that better simulate real-world conditions.

The authors of a new article say the brain’s ability to perform “approximate probabilistic inference” cannot be truly studied with simple tasks that are “ill-suited to expose the inferential computations that make the brain special.”

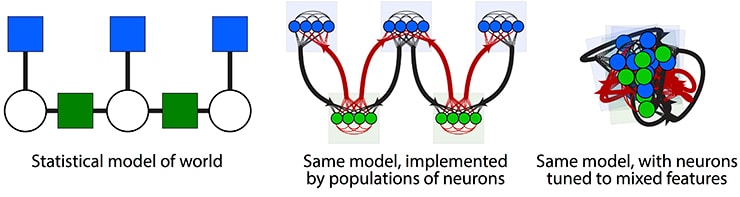

The article suggests the brain uses nonlinear message-passing between connected, redundant populations of neurons that draw upon a probabilistic model of the world. That model, passed down in coarse form through evolution and refined through learning, simplifies decision-making by drawing on general concepts and on the model's particular biases.
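For a concrete picture of what "message-passing" means, here is a minimal sketch of the textbook sum-product (belief propagation) algorithm on a hypothetical chain of three binary variables. It is not the model proposed in the article: the evidence table `unary`, the coupling table `pair`, and the function names are illustrative assumptions. Each node combines its incoming messages by multiplication, which is what makes the updates nonlinear, and the result is checked against brute-force enumeration.

```python
import itertools
import numpy as np

# Hypothetical toy model: a chain x0 - x1 - x2 of binary latent variables.
# unary[i] is the local evidence for x_i; pair couples neighboring variables.
unary = np.array([[0.7, 0.3],
                  [0.5, 0.5],
                  [0.2, 0.8]])
pair = np.array([[0.9, 0.1],
                 [0.1, 0.9]])  # neighbors prefer to take the same value

def chain_marginals(unary, pair):
    """Sum-product message passing on a chain; returns normalized marginals."""
    n = len(unary)
    fwd = [np.ones(2) for _ in range(n)]  # message arriving at node i from the left
    bwd = [np.ones(2) for _ in range(n)]  # message arriving at node i from the right
    for i in range(1, n):
        m = pair.T @ (unary[i - 1] * fwd[i - 1])  # sum over x_{i-1}
        fwd[i] = m / m.sum()
    for i in range(n - 2, -1, -1):
        m = pair @ (unary[i + 1] * bwd[i + 1])  # sum over x_{i+1}
        bwd[i] = m / m.sum()
    # Each belief multiplies local evidence with both incoming messages.
    beliefs = unary * np.array(fwd) * np.array(bwd)
    return beliefs / beliefs.sum(axis=1, keepdims=True)

def brute_force_marginals(unary, pair):
    """Reference answer: enumerate every joint state and sum probabilities."""
    n = len(unary)
    marg = np.zeros((n, 2))
    for xs in itertools.product([0, 1], repeat=n):
        p = np.prod([unary[i][x] for i, x in enumerate(xs)])
        p *= np.prod([pair[xs[i], xs[i + 1]] for i in range(n - 1)])
        for i, x in enumerate(xs):
            marg[i, x] += p
    return marg / marg.sum(axis=1, keepdims=True)

print(chain_marginals(unary, pair).round(3))
print(np.allclose(chain_marginals(unary, pair),
                  brute_force_marginals(unary, pair)))  # True: exact on a chain
```

On a chain, or any tree, these local updates give exact marginals. Running the same updates on a graph with loops (loopy belief propagation) yields only approximate answers, which is one concrete sense in which inference by message passing can be "approximate."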

The article, which lays out a broad research agenda for neuroscience, appears in the journal Neuron.

“Evolution has given us what we call a good model bias,” says Xaq Pitkow, an assistant professor in the neuroscience department and co-director of the Center for Neuroscience and Artificial Intelligence at Baylor College of Medicine, as well as an assistant professor of electrical and computer engineering at Rice University.

“It’s been known for a couple of decades that very simple neural networks can compute any function, but those universal networks can be enormous, requiring extraordinary time and resources.

“In contrast, if you have the right kind of model—not a completely general model that could learn anything, but a more limited model that can learn specific things, especially the kind of things that often happen in the real world—then you have a model that’s biased. In this sense, bias can be a positive trait. We use it to be sensitive to the right things in the world that we inhabit. Of course, the flip side is that when our brain’s bias is not matched to reality, it can lead to severe problems.”
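Pitkow's point that very simple networks can compute any function, but may need to be enormous, can be illustrated with a standard construction that is not taken from the article: one hidden layer of ReLU units can reproduce the piecewise-linear interpolant of a function, using one unit per linear piece. The target function, interval, and helper names below are illustrative assumptions.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def interpolating_relu_net(f, n_units, lo=0.0, hi=1.0):
    """Build a one-hidden-layer ReLU network (hypothetical helper) that
    reproduces the piecewise-linear interpolant of f on n_units + 1 knots."""
    knots = np.linspace(lo, hi, n_units + 1)
    values = f(knots)
    slopes = np.diff(values) / np.diff(knots)  # slope on each segment
    # Hidden unit k switches on at knots[k]; its output weight is the
    # change in slope at that knot, so active units sum to the local slope.
    out_w = np.concatenate(([slopes[0]], np.diff(slopes)))
    bias = values[0]

    def net(x):
        hidden = relu(np.asarray(x)[..., None] - knots[:-1])  # (..., n_units)
        return bias + hidden @ out_w

    return net

x = np.linspace(0.0, 1.0, 1001)
target = lambda t: np.sin(2 * np.pi * t)
for n in (4, 16, 64):
    net = interpolating_relu_net(target, n)
    err = np.max(np.abs(net(x) - target(x)))
    print(f"{n:3d} hidden units -> max error {err:.4f}")
```

Here accuracy is bought purely with width: for a smooth one-dimensional target the error shrinks roughly in proportion to 1/N^2 for N units, and a grid of the same resolution over d input dimensions would have N^d cells. The article's argument is that a model biased toward the structure of the real world avoids paying this cost.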

The researchers say simple tests of brain processes, such as tasks in which subjects choose between two options, yield only correspondingly simple results.

“Before we had access to large amounts of data, neuroscience made huge strides from using simple tasks, and they’ll remain very useful,” Pitkow says. “But for computations that we think are most important about the brain, there are things you just can’t reveal with some of those tasks.”


Pitkow and his coauthor write that tasks should incorporate more diversity, such as nuisance variables and uncertainty, to better simulate the real-world conditions that the brain evolved to handle.

They suggest that the brain infers solutions based on statistical crosstalk between redundant population codes. Population codes are responses by collections of neurons that are sensitive to certain inputs, like the shape or movement of an object. Pitkow and his coauthor think that to better understand the brain, it can be more useful to describe what these populations compute, rather than precisely how each individual neuron computes it. Pitkow says this means thinking “at the representational level” rather than the “mechanistic level,” as described by the influential vision scientist David Marr.
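As a rough illustration of reading a probability distribution, rather than a single number, out of a population code, the sketch below assumes a textbook setup that is not from the article: independent neurons with Poisson spike counts, Gaussian tuning curves over a one-dimensional stimulus, and a flat prior. All parameter values and names are hypothetical. Summing each neuron's log-likelihood turns a vector of spike counts into a posterior over the stimulus, a description of what the population computes rather than how any single neuron does it.

```python
import numpy as np

# Hypothetical population: 16 neurons with Gaussian tuning curves tiling
# stimulus values from -90 to 90 (for example, motion direction in degrees).
PREFERRED = np.linspace(-90, 90, 16)
GAIN, WIDTH, BASELINE = 10.0, 20.0, 0.5

def tuning(s):
    """Mean spike count of every neuron for stimulus value(s) s."""
    s = np.atleast_1d(s)[:, None]  # shape (len(s), 1)
    return BASELINE + GAIN * np.exp(-0.5 * ((s - PREFERRED) / WIDTH) ** 2)

def posterior(counts, grid):
    """Posterior over grid given spike counts, assuming independent Poisson
    neurons and a flat prior. Terms constant in s (log r!) are dropped."""
    rates = tuning(grid)  # shape (len(grid), n_neurons)
    log_like = (counts * np.log(rates) - rates).sum(axis=1)
    log_like -= log_like.max()  # subtract the max for numerical stability
    post = np.exp(log_like)
    return post / post.sum()

grid = np.linspace(-90, 90, 361)  # 0.5-degree steps
rng = np.random.default_rng(0)
counts = rng.poisson(tuning(30.0)[0])  # one noisy trial, true stimulus 30
post = posterior(counts, grid)
mean = (grid * post).sum()
sd = np.sqrt(((grid - mean) ** 2 * post).sum())
print(f"posterior mean {mean:.1f}, sd {sd:.1f}")
```

Because independent Poisson log-likelihoods add, two redundant subpopulations encoding the same stimulus could be combined in this sketch simply by summing their log-likelihoods before normalizing. Real neural populations have correlated noise, which this example ignores.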

The research has implications for artificial intelligence, another interest of both researchers.

“A lot of artificial intelligence has done impressive work lately, but it still fails in some spectacular ways,” Pitkow says.

“They can play the ancient game of Go and beat the best human player in the world, as done recently by DeepMind’s AlphaGo about a decade before anybody expected. But AlphaGo doesn’t know how to pick up the Go pieces. Even the best algorithms are extremely specialized. Their ability to generalize is often still pretty poor,” he says.


“Our brains have a much better model of the world; we can learn more from less data. Neuroscience theories suggest ways to translate experiments into smarter algorithms that could lead to a greater understanding of general intelligence.”

The McNair Foundation, the National Science Foundation, Britton Sanderford, the Intelligence Advanced Research Projects Activity via the Department of the Interior/Interior Business Center, the Simons Collaboration on the Global Brain, and the National Institutes of Health supported the research.

Source: Rice University